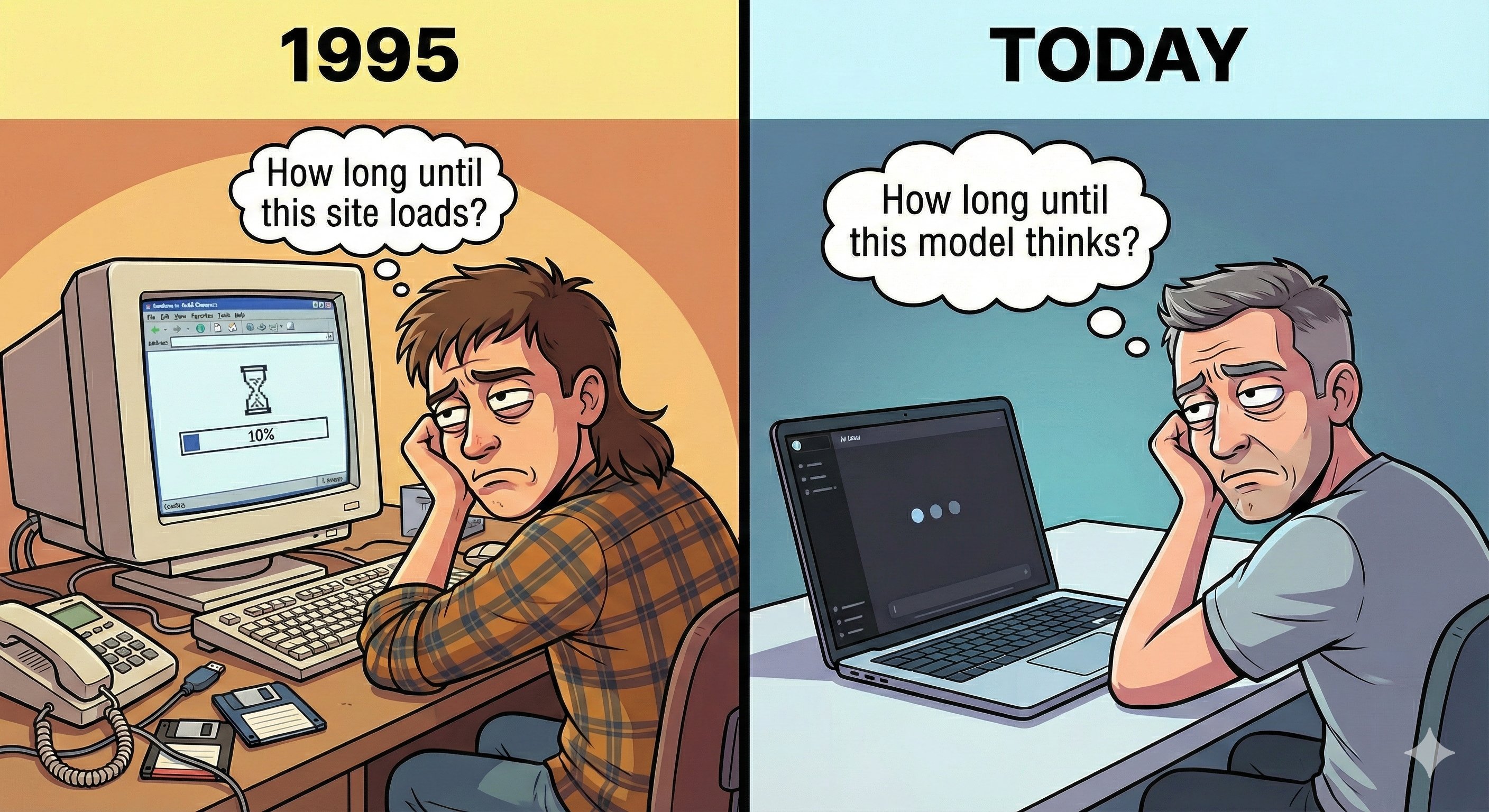

AI Today is Dial-Up

The state of AI today really reminds me of the internet in the 90s. Before broadband, the internet was mostly... chat. You'd type something, wait, get a text response. Sound familiar?

At 50–150 tokens/sec, AI is a chatbot. Everything we're building — the wrappers, UX patterns, business models — assumes that interaction model. But the hardware trajectory is pointing somewhere different. Groq, Cerebras, SambaNova, newer players like Taalas — all pushing into thousands of tokens/sec.

At that speed, AI stops being a chatbot. Broadband didn't just make email faster; it created Netflix. Instead of ever-larger models that "know" the answer, you could run a small model thousands of times in parallel. Generate, mutate, verify, search at runtime. Interfaces that don't exist until you need them. Robotics untethered from the cloud.
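
To make "generate, mutate, verify, search" a bit more concrete, here is a toy sketch of that loop. Nothing in it is a real model or a real API: `generate` and `mutate` are hypothetical stand-ins for fast calls to a small model, `verify` is a stand-in for a cheap checker (tests, a type checker, a simulator), and the string `TARGET` is an invented spec that only the verifier sees. Calls run one after another here for simplicity; the point of fast hardware is that they could run in parallel.

```python
import random

ALPHABET = "abcdefghijklmnopqrstuvwxyz "
# Hypothetical spec visible only to the verifier (an assumption for this toy).
TARGET = "tokens are cheap"


def generate(rng: random.Random, length: int) -> str:
    """Stand-in for one cheap model call: propose a random candidate."""
    return "".join(rng.choice(ALPHABET) for _ in range(length))


def mutate(rng: random.Random, candidate: str) -> str:
    """Stand-in for asking the model to revise: change one character."""
    i = rng.randrange(len(candidate))
    return candidate[:i] + rng.choice(ALPHABET) + candidate[i + 1:]


def verify(candidate: str) -> int:
    """Cheap verifier: number of positions that satisfy the spec."""
    return sum(a == b for a, b in zip(candidate, TARGET))


def search(seed: int = 0, population: int = 200, keep: int = 20,
           max_rounds: int = 500) -> tuple[str, int]:
    """Generate many candidates, keep the best-verified, mutate, repeat."""
    rng = random.Random(seed)
    pool = [generate(rng, len(TARGET)) for _ in range(population)]
    for round_no in range(max_rounds):
        pool.sort(key=verify, reverse=True)
        if verify(pool[0]) == len(TARGET):
            return pool[0], round_no
        survivors = pool[:keep]
        # Refill the pool with revisions of the candidates that verified best.
        pool = survivors + [
            mutate(rng, rng.choice(survivors))
            for _ in range(population - keep)
        ]
    pool.sort(key=verify, reverse=True)
    return pool[0], max_rounds


if __name__ == "__main__":
    best, rounds = search()
    print(f"found {best!r} after {rounds} rounds")
```

No single call here "knows" the answer. The result comes from volume plus a verifier that can score candidates, which is why the quality of `verify` matters more than the quality of any one generation.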

But these are just examples I can think of today. The most interesting things broadband enabled weren't predicted in 1995. I suspect the same will be true here. One thing I do think is predictable: when generation becomes nearly free, the bottleneck moves to verification. When tokens are cheap, trust is expensive.