LLMs as Formulators, Not Optimizers

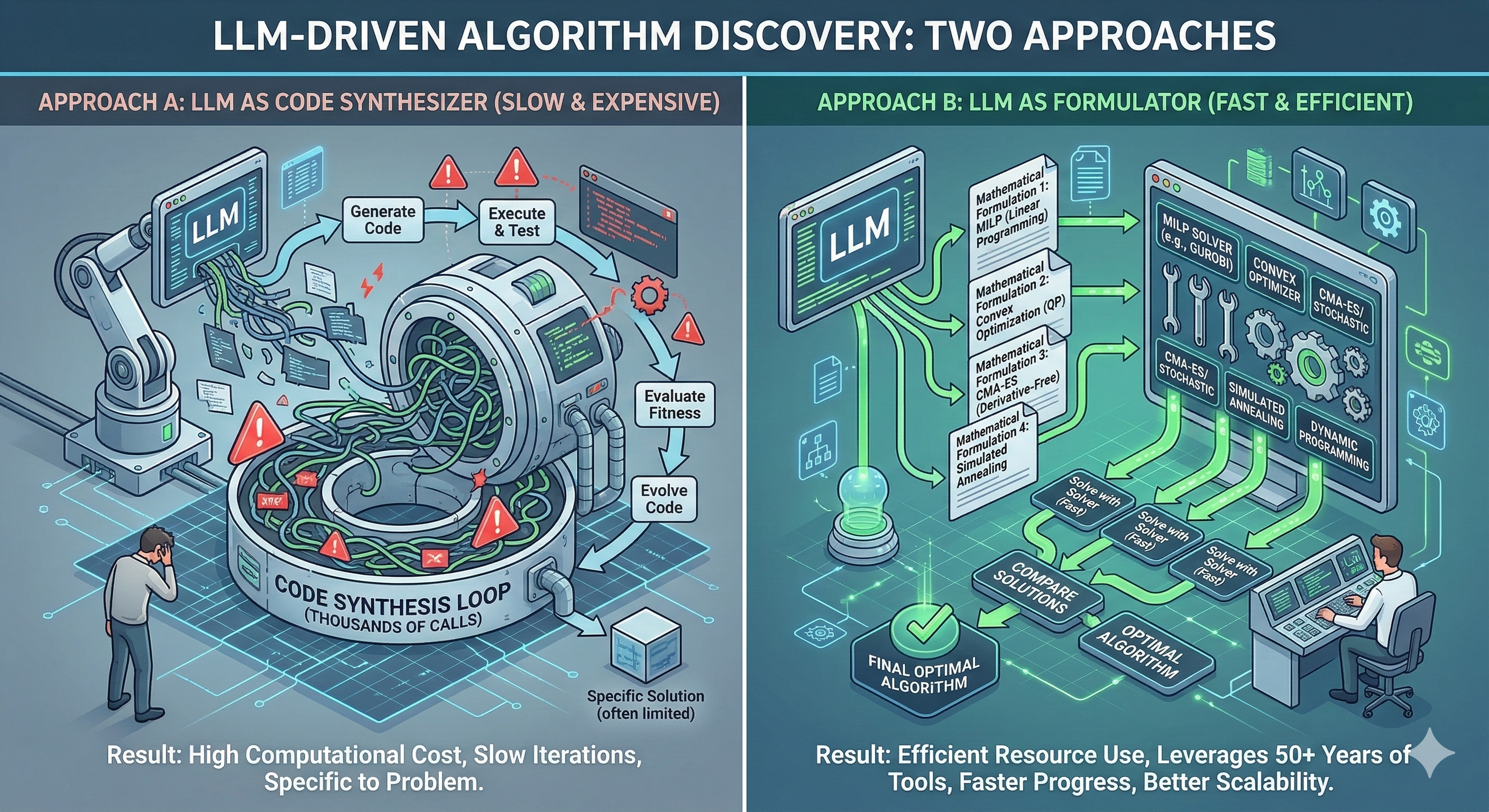

One thing keeps bothering me about program synthesis approaches like AlphaEvolve. The optimization happens through code generation: the LLM writes code that implicitly performs the search. That can easily mean thousands of model calls just to explore the search space.

But we already have extremely powerful tools: convex optimization, MILP solvers, CMA-ES, simulated annealing, reinforcement learning, dynamic programming. We spent more than 50 years building this toolbox.

Instead of synthesizing programs that implicitly perform optimization, we could often do something simpler: the LLM formulates the problem, and a classical optimizer solves it. This reminds me of a very common research pattern in electrical engineering about 10–15 years ago: model the problem, derive a tractable relaxation, solve it with a solver, bound the gap. The hard part was never the solving. It was formulating the problem correctly. The same might be true for LLMs today.
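To make the model / relax / solve / bound pattern concrete, here is a minimal sketch on a toy 0/1 knapsack problem, in plain Python. Everything in it is an assumption for illustration: the `formulation` dict is a hypothetical stand-in for what an LLM might emit (no model is called), the relaxation is the standard fractional knapsack bound, and a small dynamic program plays the role of the classical solver.

```python
from fractions import Fraction

# Hypothetical formulation, standing in for structured output from an LLM.
# The numbers are made up for this example.
formulation = {
    "values": [60, 100, 120],
    "weights": [10, 20, 30],   # assumed to be positive integers
    "capacity": 50,
}

def relaxation_bound(values, weights, capacity):
    """Upper bound from the LP relaxation: items may be taken fractionally."""
    # Greedy by value density is optimal for the fractional problem.
    order = sorted(
        range(len(values)),
        key=lambda i: Fraction(values[i], weights[i]),
        reverse=True,
    )
    remaining, bound = capacity, Fraction(0)
    for i in order:
        take = min(weights[i], remaining)
        bound += Fraction(values[i] * take, weights[i])
        remaining -= take
        if remaining == 0:
            break
    return bound

def solve_exact(values, weights, capacity):
    """Exact 0/1 knapsack value via dynamic programming over capacities."""
    best = [0] * (capacity + 1)
    for v, w in zip(values, weights):
        # Iterate capacities downward so each item is used at most once.
        for c in range(capacity, w - 1, -1):
            best[c] = max(best[c], best[c - w] + v)
    return best[capacity]

upper = relaxation_bound(**formulation)   # relaxed problem: upper bound
optimum = solve_exact(**formulation)      # original integer problem
gap = upper - optimum                     # how loose the relaxation is

print(upper, optimum, gap)  # prints: 240 220 20
```

The solver code never changes from problem to problem. The only part that would come from the model is the `formulation` dict, which is one call rather than thousands.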