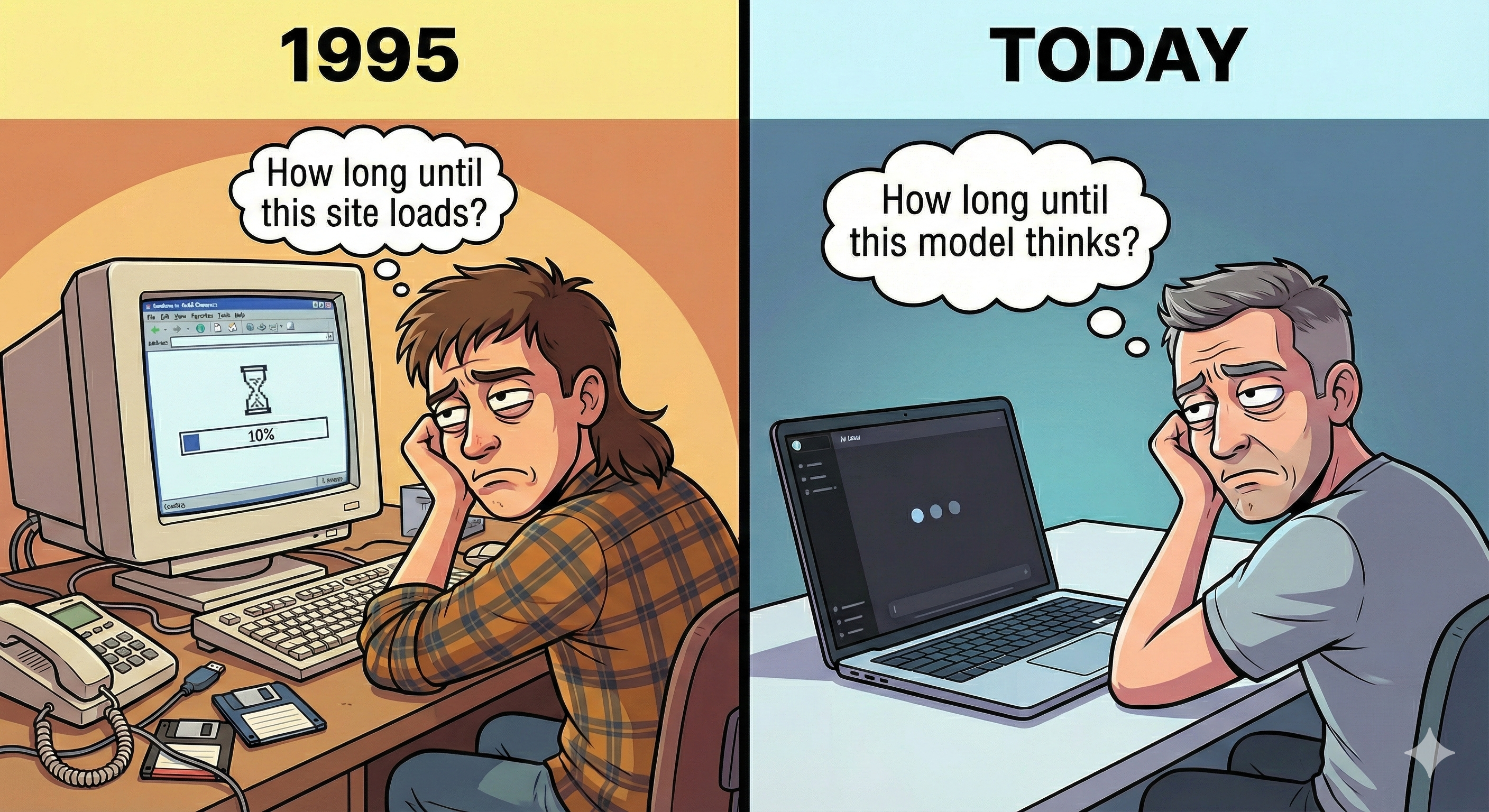

AI Today is Dial-Up

At 50–150 tokens/sec, AI is a chatbot. The hardware trajectory is pointing somewhere entirely different.

The Dial-Up Era of AI

The state of AI today really reminds me of the internet in the 90s.

Before broadband, the internet was mostly… chat. You’d type something, wait, get a text response. Sound familiar?

It’s not that people lacked vision. Dial-up couldn’t carry anything else. Nobody was planning YouTube on a 56k modem. The speed ceiling shaped what was even thinkable.

Then broadband arrived and enabled entirely new categories nobody predicted: streaming, social media, real-time collaboration. The speed itself was the unlock.

AI at the Inflection Point

I think AI is at exactly that inflection point. At 50–150 tokens/sec, AI is a chatbot. We type, wait, read. Everything we’re building (the wrappers, UX patterns, business models) assumes that interaction model.

But the hardware trajectory is pointing somewhere different. Groq is pushing into thousands of tokens/sec with their LPU architecture. Cerebras and SambaNova are in a similar range. Newer players like Taalas are going even further, hardwiring models directly into silicon and reporting ~17K tokens/sec per user.

(These are early benchmarks, and not all of them are apples-to-apples. But the direction is clear: inference speed is scaling fast and cost per token is collapsing.)

At that speed, AI stops being a chatbot, just as broadband didn’t simply make email faster. It created Netflix.

What Becomes Possible

Examples I keep thinking about:

Parallel search over memorization. Instead of ever-larger models that “know” the answer, run a small model thousands of times in parallel. Generate, mutate, verify, and search at runtime. In “More Agents Is All You Need” (Li et al., 2024), Llama-13B with 35 rollouts outperforms single-shot Llama-70B on GSM8K. A model roughly 5x smaller wins by searching instead of memorizing.
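
To make the "search instead of memorize" idea concrete, here is a toy simulation of sampling many rollouts and taking a majority vote. It is a sketch, not the paper's setup: `noisy_solver` is a hypothetical stand-in for one rollout of a small model, the 40% single-shot accuracy is an invented number, and the rollouts are assumed to fail independently, which real model samples do not.

```python
import random
from collections import Counter


def noisy_solver(true_answer: int, p_correct: float, rng: random.Random) -> int:
    """Hypothetical stand-in for one rollout of a small model.

    Returns the right answer with probability p_correct, otherwise a
    nearby wrong answer chosen uniformly from six alternatives.
    """
    if rng.random() < p_correct:
        return true_answer
    return true_answer + rng.choice([-3, -2, -1, 1, 2, 3])


def sample_and_vote(true_answer: int, p_correct: float,
                    n_rollouts: int, rng: random.Random) -> int:
    """Run n_rollouts independent rollouts and return the most common answer."""
    answers = [noisy_solver(true_answer, p_correct, rng) for _ in range(n_rollouts)]
    return Counter(answers).most_common(1)[0][0]


def accuracy(n_rollouts: int, p_correct: float = 0.4,
             trials: int = 2000, seed: int = 0) -> float:
    """Fraction of trials in which the voted answer is correct."""
    rng = random.Random(seed)
    hits = sum(
        sample_and_vote(42, p_correct, n_rollouts, rng) == 42
        for _ in range(trials)
    )
    return hits / trials


if __name__ == "__main__":
    for n in (1, 5, 35):
        print(f"{n:>2} rollouts: accuracy {accuracy(n):.2f}")
```

Because wrong answers scatter while right answers agree, the vote should land on the correct answer far more often than a single rollout does. The catch is cost: every extra rollout is extra tokens, which is why this only becomes practical when tokens are fast and cheap.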

Ephemeral interfaces. Interfaces that don’t exist until you need them. At thousands of tokens/sec, a chip generates UI on the fly. Personalized, contextual, ephemeral.

Untethered robotics. A robot can’t wait 500 ms for a cloud GPU. On-prem inference at this speed means real-time planning with tight control loops.
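
A back-of-the-envelope way to see the gap is to count control ticks. The sketch below assumes a 100 Hz control loop, which is an illustrative figure and not from any specific robot, and reuses the token rates quoted earlier in this post as rough inputs.

```python
def ticks_missed(latency_ms: float, control_hz: float) -> float:
    """How many control-loop ticks pass while waiting latency_ms for a reply."""
    return latency_ms / 1000 * control_hz


def tokens_per_tick(tokens_per_sec: float, control_hz: float) -> float:
    """How many tokens a model can generate within one control-loop tick."""
    return tokens_per_sec / control_hz


CONTROL_HZ = 100  # assumed control-loop rate, for illustration only

if __name__ == "__main__":
    # A 500 ms cloud round trip, measured in control ticks.
    print("ticks missed during a 500 ms wait:", ticks_missed(500, CONTROL_HZ))

    # Token budget per tick at chatbot-era speed vs. the ~17K tokens/sec figure.
    for rate in (100, 17_000):
        print(f"{rate} tokens/sec -> {tokens_per_tick(rate, CONTROL_HZ)} tokens per tick")
```

Under these assumptions, a 500 ms wait spans 50 ticks of the loop, and a 100 tokens/sec model gets one token per tick, which is not enough to plan anything. At 17,000 tokens/sec the budget is 170 tokens per tick, enough for a short plan inside the loop itself.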

Mixture-of-LoRA-Experts. Add hot-swappable LoRA adapters on hardwired silicon and you get narrow specialists at silicon speed, each outperforming a single generalist. (Still early, open problems remain.)
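
For readers who haven't seen LoRA, the core trick is small: keep the base weight W frozen and add a low-rank correction, so the output is W·x + scale·B·(A·x). The sketch below shows that update with two swappable adapters. Everything in it is made up for illustration: the 2x2 base weight, the adapter names and values, and picking an adapter by name. A real mixture would use a learned router, and how adapters would be attached to hardwired silicon is exactly the open problem.

```python
from typing import Dict, List, Tuple

Matrix = List[List[float]]
Vector = List[float]


def matvec(m: Matrix, v: Vector) -> Vector:
    """Plain matrix-vector product."""
    return [sum(w * x for w, x in zip(row, v)) for row in m]


# Frozen base weight, standing in for weights fixed in silicon (identity here).
W_BASE: Matrix = [[1.0, 0.0], [0.0, 1.0]]

# Hypothetical rank-1 adapters: name -> (A, B), with A of shape r x d_in
# and B of shape d_out x r. Only these small matrices change per specialist.
ADAPTERS: Dict[str, Tuple[Matrix, Matrix]] = {
    "legal": ([[1.0, 0.0]], [[0.5], [0.0]]),
    "code": ([[0.0, 1.0]], [[0.0], [2.0]]),
}


def forward(x: Vector, adapter: str, scale: float = 1.0) -> Vector:
    """Compute W x + scale * B (A x) using the selected adapter."""
    a, b = ADAPTERS[adapter]
    base = matvec(W_BASE, x)
    delta = matvec(b, matvec(a, x))
    return [y + scale * d for y, d in zip(base, delta)]


if __name__ == "__main__":
    x = [2.0, 3.0]
    for name in ADAPTERS:
        print(name, forward(x, name))
```

The base weights never change between calls. Swapping specialists means swapping only the tiny A and B matrices, which is what makes the hot-swap idea plausible in the first place.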

The Unpredictable Future

But these are just examples I can think of today. The most interesting things broadband enabled weren’t predicted in 1995. I suspect the same will be true here.

One thing I do think is predictable: when generation becomes nearly free, the bottleneck moves to verification. When tokens are cheap, trust is expensive.

And just like broadband’s biggest winners weren’t the fiber companies but the ones who built Netflix and YouTube on top, the winners here probably won’t make the chips.

So: if inference becomes practically free and instantaneous, what’s the next trillion-dollar company that gets built on top?