The Sea Squirt Principle: When AI Learns to Shrink Itself

A useful sign of learning is not that a system thinks faster — it's that repeated situations require less explicit reasoning

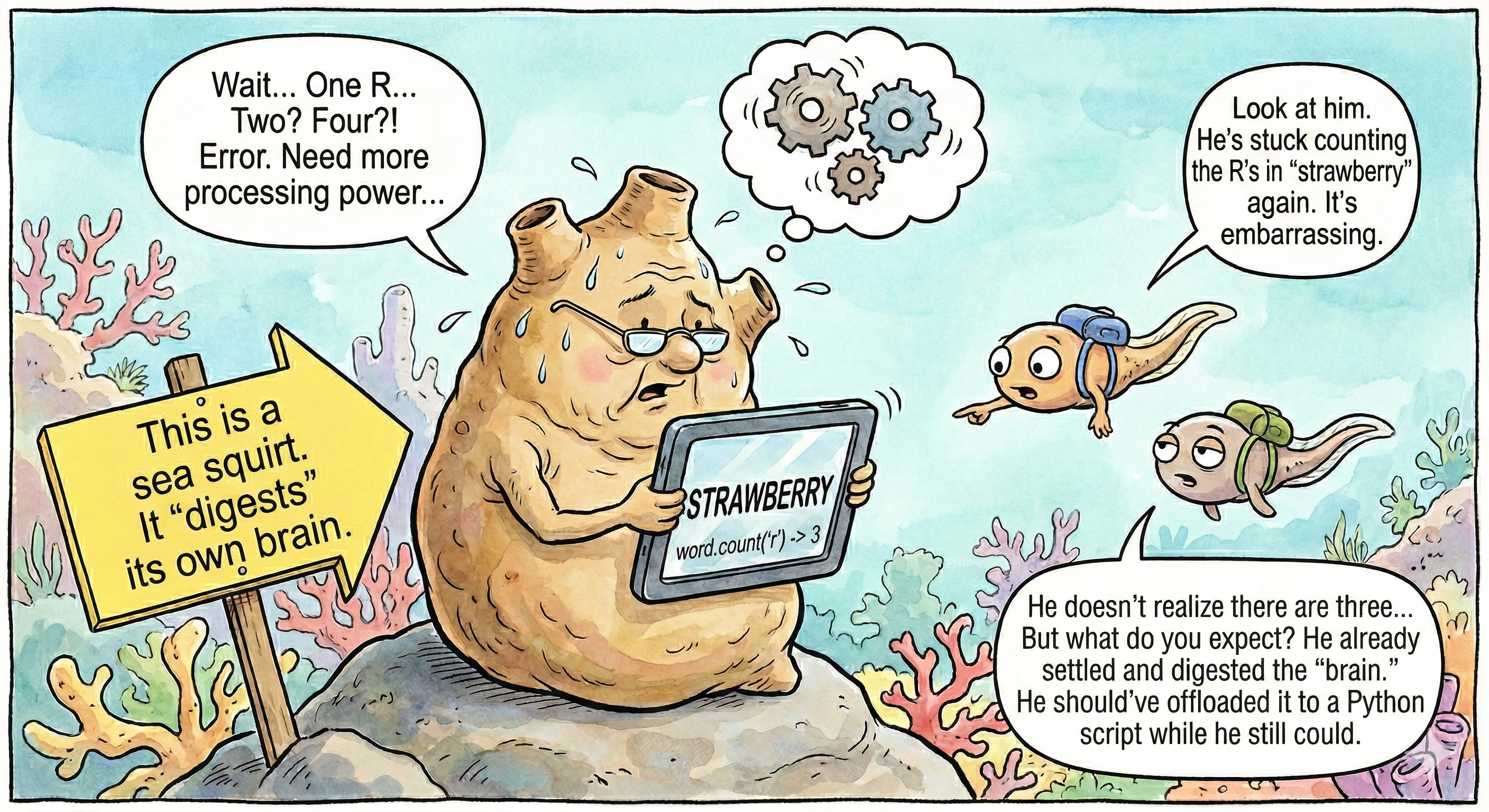

There’s an animal called the sea squirt that is often described (a bit dramatically) as “eating its own brain.”

Not literally a vertebrate brain, yes, I’m saying this now so the biologists don’t attack me in the comments.

But the core idea is fascinating: early on, it needs a nervous system to move, explore, and find where to attach. Once it settles, it no longer needs the same machinery in the same way.

I’ve been thinking about this as a learning principle.

Learning is not faster thinking

A useful sign of learning is not necessarily that a system thinks faster. It is that the same repeated situations require less explicit reasoning.

A chess master is not simply a beginner who calculates faster. In many positions, the master is not “calculating” in the beginner’s sense at all.

Much of what a beginner must think through move by move has already become pattern recognition, chunking, and stored procedure.

Cognitive offloading in LLM systems

I think there is a similar (and underappreciated) form of continual learning in LLM-based systems.

We usually frame continual learning as adapting weights. That is part of the story.

But another part may be this: one form of learning is an LLM making parts of its own role unnecessary by turning repeated reasoning into verified code and tools.

This matters because LLMs often have much stronger declarative knowledge than functional reliability. They may know the method before they can execute it consistently in direct generation.

The “strawberry” example

A well-known example is the “strawberry” question: an LLM may fail when directly asked how many “r”s are in “strawberry,” yet easily write a short program that counts characters correctly.

In that case, the code can outperform the model that wrote it.

That is not just optimization. It is knowledge being converted from description to reliable execution.

Building a system that shrinks itself

I’ve been exploring this idea in a system I’m building, where the model is not just a fallback router but a synthesis layer that creates local executors and gradually reduces its own role over time.

So when repeated LLM reasoning gets crystallized into verified tools and code, I think something more than efficiency is happening: the system is learning by shrinking the set of things that still require open-ended LLM reasoning.

Early results from this approach: 67% of tasks offloaded to deterministic code, same accuracy, a quarter of the cost.

The sea squirt doesn’t lose capability when it absorbs its nervous system. It transitions from exploration to exploitation. The interesting question is whether LLM-based systems can learn to do the same thing — not by forgetting, but by converting what they know into forms that no longer require them.