LLMs as Formulators, Not Optimizers

The hard part was never solving. It was formulating the problem correctly.

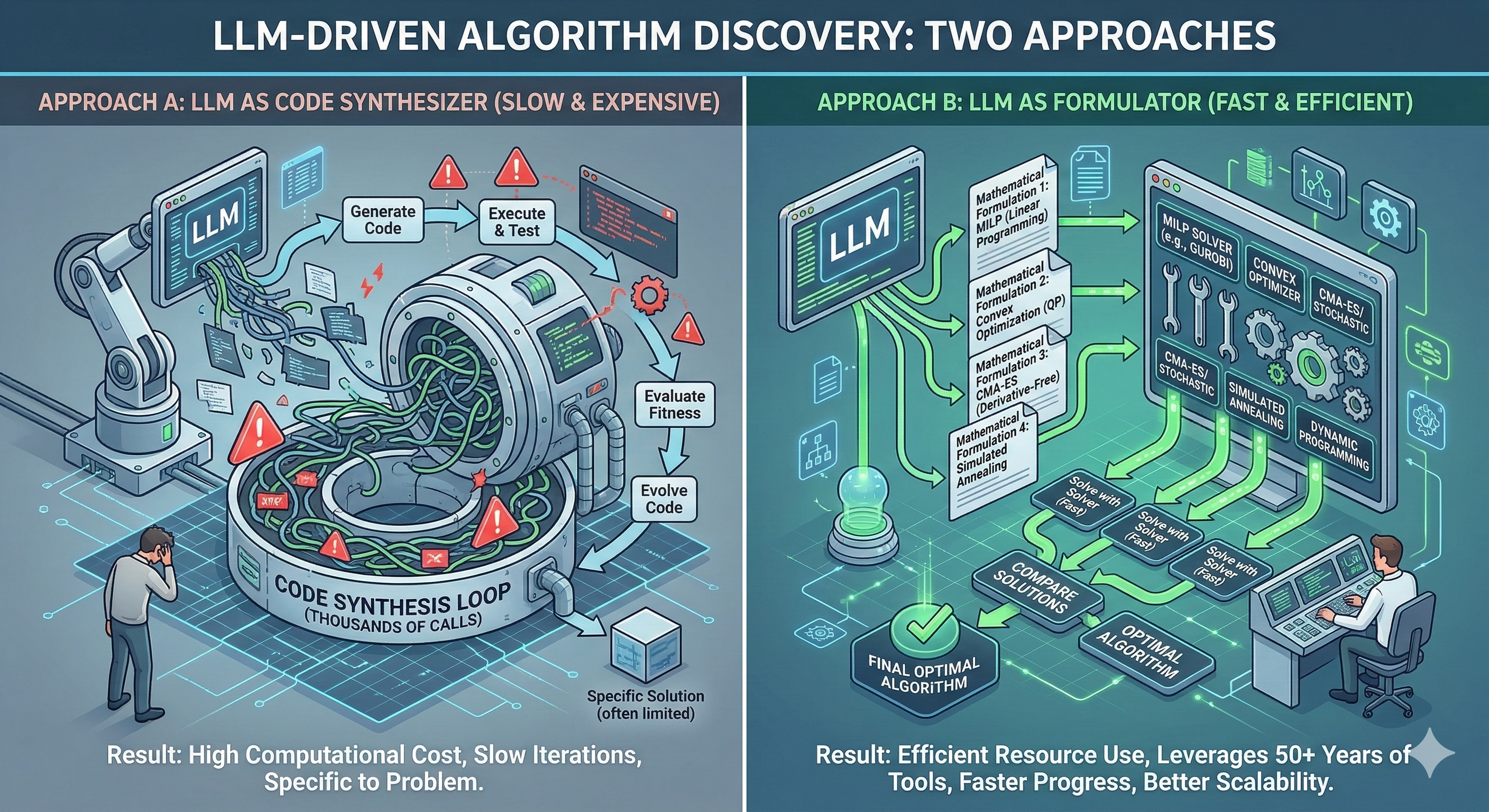

For those who aren’t familiar, AlphaEvolve is an “algorithm discovery” system. The idea is simple and powerful: use an LLM to generate candidate programs, evaluate them, and evolve better solutions over time.

It’s an exciting direction, and the role of LLMs in such systems makes sense to me. LLMs fit naturally as planners: they can propose problem formulations, decompositions, heuristics, and search spaces.

But one thing keeps bothering me. In many examples, the optimization itself is performed through program synthesis: the LLM generates code that implicitly performs the search. And program synthesis is expensive. Every step of the search is a full cycle of generating code, executing it, and evaluating the result, and that cycle repeats many times. That can easily mean thousands of model calls just to explore the search space.

A simpler alternative

There is a simpler and often cheaper alternative. Use the LLM once to generate several mathematical formulations of the problem, and then let classical optimizers do what they are designed to do.

We already have an extremely powerful set of methods and solvers:

- Convex optimization

- MILP solvers

- CMA-ES

- Simulated annealing

- Reinforcement learning

- Dynamic programming

Instead of synthesizing programs that implicitly perform optimization, we could often do something simpler:

LLM → formulate the problem | Classical optimizer → solve it
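Here is a minimal sketch of that split, assuming the LLM has been called once and returned a formulation as plain data: an objective, box bounds, and a starting point. The names `Formulation` and `simulated_annealing` and the toy quadratic objective are all hypothetical and stand in for whatever a model would actually emit. The solver is a textbook simulated annealing loop using only the Python standard library, not anything taken from AlphaEvolve.

```python
import math
import random
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Formulation:
    """What the LLM would hand over: a problem statement, not a solver."""
    objective: Callable[[List[float]], float]  # value to minimize
    bounds: List[Tuple[float, float]]          # box constraints per variable
    start: List[float]                         # initial feasible point


def simulated_annealing(
    f: Formulation, steps: int = 20000, seed: int = 0
) -> Tuple[List[float], float]:
    """Textbook simulated annealing: Gaussian proposals, geometric cooling."""
    rng = random.Random(seed)
    x = list(f.start)
    fx = f.objective(x)
    best_x, best_fx = list(x), fx
    temp = 1.0
    for _ in range(steps):
        # Propose a neighbor, clipped to the box constraints.
        cand = [
            min(hi, max(lo, xi + rng.gauss(0.0, 0.1)))
            for xi, (lo, hi) in zip(x, f.bounds)
        ]
        fc = f.objective(cand)
        # Always accept improvements; accept worse moves with Boltzmann probability.
        if fc <= fx or rng.random() < math.exp((fx - fc) / temp):
            x, fx = cand, fc
            if fx < best_fx:
                best_x, best_fx = list(x), fx
        temp = max(1e-6, temp * 0.9995)
    return best_x, best_fx


# Hypothetical formulation, standing in for the single LLM call.
toy = Formulation(
    objective=lambda v: (v[0] - 1.0) ** 2 + (v[1] + 2.0) ** 2,
    bounds=[(-5.0, 5.0), (-5.0, 5.0)],
    start=[0.0, 0.0],
)

solution, value = simulated_annealing(toy)
```

The point of the sketch is the accounting: the 20,000 objective evaluations in the loop are plain function calls, and the model is never consulted again after it produces `toy`.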

A pattern from electrical engineering

In fact, this reminds me of a very common research pattern in electrical engineering and signal processing about 10–15 years ago. The typical recipe looked something like this:

- Model the problem

- Derive a tractable relaxation

- Solve it with a solver

- Bound the gap to the original problem
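To make the recipe concrete, here is a small sketch on a problem of my own choosing, 0/1 knapsack, with made-up item data. The relaxation allows items to be split, and for that particular relaxation a greedy fill by value-to-weight ratio is optimal, so no external solver is needed. All function names are hypothetical, and the dynamic program is included only to check the bound on this toy instance.

```python
from typing import List, Tuple

Item = Tuple[int, int]  # (value, weight), weights assumed positive


def by_ratio(items: List[Item]) -> List[Item]:
    """Items sorted by value per unit weight, best first."""
    return sorted(items, key=lambda it: it[0] / it[1], reverse=True)


def relaxation_bound(items: List[Item], capacity: int) -> float:
    """Steps 2 and 3: relax 0/1 choices to fractions and solve the relaxation.

    Greedy filling by ratio is optimal for the fractional knapsack,
    so its value is an upper bound on the 0/1 optimum.
    """
    remaining = capacity
    total = 0.0
    for value, weight in by_ratio(items):
        if remaining <= 0:
            break
        take = min(weight, remaining)
        total += value * take / weight
        remaining -= take
    return total


def rounded_solution(items: List[Item], capacity: int) -> int:
    """Feasible 0/1 solution: same order, but only take items that fit whole."""
    remaining = capacity
    total = 0
    for value, weight in by_ratio(items):
        if weight <= remaining:
            total += value
            remaining -= weight
    return total


def exact_optimum(items: List[Item], capacity: int) -> int:
    """Reference answer via dynamic programming over capacities."""
    best = [0] * (capacity + 1)
    for value, weight in items:
        for c in range(capacity, weight - 1, -1):
            best[c] = max(best[c], best[c - weight] + value)
    return best[capacity]


# Step 1: model the problem (hypothetical data).
items = [(60, 10), (100, 20), (120, 30)]
capacity = 50

upper = relaxation_bound(items, capacity)  # relaxed optimum, an upper bound
lower = rounded_solution(items, capacity)  # feasible value, a lower bound
optimum = exact_optimum(items, capacity)

# Step 4: the true optimum is sandwiched, so the gap is at most upper - lower.
gap = upper - lower
```

On this instance the relaxation gives 240, the rounded solution gives 160, and the exact optimum is 220, so the optimum sits inside the interval the recipe promises without the exact solver ever being required.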

The parallel is striking: back then, the hard part was not solving the problem. It was formulating it correctly. The same might be true for LLM-driven optimization today.

The real opportunity

LLMs could be incredibly useful in exactly that role. Instead of acting as optimizers, they could generate candidate formulations — possibly several of them — and let classical solvers compete.

In many cases this would likely be both cheaper and more efficient than large loops of program synthesis.
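As a sketch of what "letting solvers compete" could look like, take a toy problem: split jobs across two machines to minimize the makespan. Assume an LLM proposed three formulations, each paired with a classical method. The job data, the function names, and the choice of methods are all hypothetical; the key design choice is that every candidate is scored by the same evaluator on the original objective.

```python
import random
from typing import Callable, Dict, List

Jobs = List[int]
Assignment = List[int]  # machine index (0 or 1) for each job


def makespan(jobs: Jobs, assign: Assignment) -> int:
    """Shared scorer: every formulation is judged on the original objective."""
    loads = [0, 0]
    for duration, machine in zip(jobs, assign):
        loads[machine] += duration
    return max(loads)


def greedy_lpt(jobs: Jobs) -> Assignment:
    """Formulation A: longest job first, always onto the lighter machine."""
    assign = [0] * len(jobs)
    loads = [0, 0]
    for i in sorted(range(len(jobs)), key=lambda i: -jobs[i]):
        m = 0 if loads[0] <= loads[1] else 1
        assign[i] = m
        loads[m] += jobs[i]
    return assign


def subset_sum_dp(jobs: Jobs) -> Assignment:
    """Formulation B: subset-sum dynamic program, exact for two machines."""
    half = sum(jobs) // 2
    # reach[s] holds one set of job indices whose durations sum to s.
    reach: Dict[int, List[int]] = {0: []}
    for i, d in enumerate(jobs):
        for s, picked in list(reach.items()):
            if s + d <= half and s + d not in reach:
                reach[s + d] = picked + [i]
    on_zero = set(reach[max(reach)])
    return [0 if i in on_zero else 1 for i in range(len(jobs))]


def local_search(jobs: Jobs, steps: int = 2000, seed: int = 0) -> Assignment:
    """Formulation C: random single-job flips, keeping non-worsening moves."""
    rng = random.Random(seed)
    assign = [rng.randrange(2) for _ in jobs]
    for _ in range(steps):
        i = rng.randrange(len(jobs))
        cand = assign[:]
        cand[i] ^= 1
        if makespan(jobs, cand) <= makespan(jobs, assign):
            assign = cand
    return assign


jobs = [3, 3, 2, 2, 2]  # hypothetical job durations
candidates: Dict[str, Callable[[Jobs], Assignment]] = {
    "greedy_lpt": greedy_lpt,
    "subset_sum_dp": subset_sum_dp,
    "local_search": local_search,
}

# Run every candidate once and keep the one with the smallest makespan.
results = {name: makespan(jobs, solve(jobs)) for name, solve in candidates.items()}
winner = min(results, key=results.get)
```

On these durations the greedy rule ends with a makespan of 7 while the dynamic program finds the perfect split at 6, which is the kind of difference a competition between formulations is meant to surface.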

We have spent more than 50 years building the optimization toolbox. The real challenge might not be building new optimizers. It might be teaching LLMs to recognize which classical tool fits the problem.